Yes that’s right, I’ve never used ChatGPT. Yes, me, someone who founded an AI startup based on the new possibilities of LLMs like GPT3.5/4. Not kidding. Neither have I used any of the other similar tools directly, such as Claude, Mistral, etc.

That’s not to say I’ve never used any AI applications that leverage these LLMs. I’ve used them in:

Superhuman

LinkedIn

Notion

Snowflake Copilot

AskEdith

Cursor

A side project of ours that provided safe internet access

And, of course, Delphi

I’ve used image generation models like DALL-E, Midjourney and the one built into Substack. I’ve seen people use ChatGPT on Zoom calls. I’ve seen them use it in VSCode extensions. I’ve read plenty of research and benchmarking about their capabilities… but I still have yet to use one directly.

As someone who was interested in founding an AI startup, I didn’t want to be dazzled by it. I didn’t want to think of it as more than a tool. I didn’t want my mind to be polluted by the hype. Looking at all the crazy press about them a year ago, and studying how they worked, I didn’t want to get involved with all of the excitement. I wanted to think about them with a rational mind. All of the doomsayer talk about this form of technology being AGI, capable of causing mass disruption or becoming sentient, is clearly wrong and I felt so at the time.

That’s not to say that an LLM application couldn’t be destructive. The shadow of Cambridge Analytica and the idea of a similar future application powered by LLMs is a clear and obvious threat. We will only know the effect of these new weapons after the many elections of 2024 happen. The internet was already full of false information, it’s going to become full of well-written half-truth - more pernicious, harder to discern, more polished.

I think of LLMs in a similar way to databases. You make a request and get a response, a query and results. Yes, unlike databases, they work in a seemingly probabilistic way. If you prompt the same version of an LLM, running on the same hardware, with an identical prompt and under the same load circumstances... you should, in theory, get the same result. It feels like AI applications are built around LLMs in the same way that the previous generation were built around Postgres.

Methods like fewshot and conversations can feel like the LLM is learning - like the puppy has learnt a new trick. The truth is that the prompt has been progressively improved until it’s reached success. I’m not immune to the magic - there have been times when we’ve been training Delphi on a new semantic layer, and you feel like it’s genuinely been like a puppy training session. It’s learnt from being corrected, then the next time it’s asked a similar question it answers correctly… again though, I know that this is engineering.

However, I think that it’s probably safe to have a play and see what I think, given my change in circumstances and new role as VP of AI at Cube. Am I a fraud to never have directly touched the technology my role is based upon? Or was it wisdom to only have seen other applications that could inspire what I wanted to build…

Let’s give it a spin, whilst comparing it to the tools I have experienced:

GPT-4 vs Superhuman AI

Prompt I entered:

Write an email to Brian about a new AWS product we heard about on a customer call, called Redlake, which supposedly allows you to query parquet/iceberg using Redshift. Has he heard about it from our reps? If so does he know if need to build a new integration to support in Cube, or does it use the same API as Redshift? Other relevant questions about a new data warehouse technology.

Superhuman AI output:

Hi Brian,

Hope you're doing well! I wanted to reach out to you about a new AWS product called Redlake that we heard about on a customer call. It seems like an interesting solution that allows you to query parquet/iceberg using Redshift.

Have you heard anything about this from our reps? If so, I was wondering if you know whether we need to build a new integration in Cube to support Redlake, or if it uses the same API as Redshift. It would be great to get some insights from you on this.

Also, since it's a new data warehouse technology, are there any other relevant questions we should be asking or considering?

Looking forward to hearing your thoughts!

Superhuman AI output, after choosing post-processing option to rewrite in my voice:

Hey Brian,

How's it going? I wanted to chat with you about this new AWS product called Redlake that came up during a customer call. It seems pretty interesting as it allows you to query parquet/iceberg using Redshift.

Do you know if our reps have mentioned anything about it? Specifically, I'm curious if we need to create a new integration in Cube to support Redlake or if it uses the same API as Redshift. It would be awesome to get your insights on this.

Also, since Redlake is a new data warehouse technology, are there any other important questions we should be asking or things we should be considering?

Looking forward to hearing your thoughts!

GPT-4-Turbo output:

Subject: Inquiry About New AWS Product - Redlake

Hi Brian,

I hope this email finds you well. I wanted to discuss a new AWS product I came across during a recent customer call. The product, named Redlake, has caught my attention as it seems to offer the capability to query Parquet/Iceberg formats using Redshift. This could potentially be a significant development for us, considering our current data handling capabilities and the increasing demand for flexible data warehousing solutions.

Given your close interaction with our AWS representatives, have you come across any information about Redlake? If yes, I'd be interested to know your thoughts on its integration with our existing systems. Specifically, can you confirm whether Redlake requires a new integration for support in Cube, or does it utilize the same API as Redshift? Understanding this will help us in assessing the development efforts required and the feasibility of incorporating this into our service offerings.

Additionally, here are a few other aspects I think we need clarity on regarding this new technology:

1. **Performance and Scalability**: How does Redlake perform in terms of query speed and scalability compared to our current solutions? Can it handle the same workload or better?

2. **Cost Implications**: What are the cost implications of adopting Redlake? Is it competitively priced in comparison to other solutions in the market?

3. **Data Security and Compliance**: Does Redlake meet our data security and regulatory compliance requirements?

4. **Market Penetration and Adoption**: Do we have any insights on how widely Redlake is being adopted or is expected to be adopted by the market?

Your insights on these questions would be incredibly valuable in helping us understand the potential of Redlake and whether we should consider this technology in our roadmap.

Looking forward to your thoughts.

Best regards,

David Jayatillake

[Your Position]

[Your Contact Information]

Thoughts:

OpenAI Chat really fails to capture my voice (it probably doesn’t know who I am very well from its training data. I’m not sure if it has been trained on Substack content) and is also quite verbose. It’s nice that it generates a subject line etc - it’s a full email structure, whereas with Superhuman, it is only the body. It doesn’t have my signature and leaves placeholders, whereas Superhuman doesn’t need to do this.

Another thing it does better though is to generate the “other relevant questions” that I put in the end of my prompt. The first two of these questions are definitely relevant and the last two aren’t bad. I happen to know the answers to them, so wouldn’t have asked. Superhuman AI doesn’t interpret my “other relevant questions” as something for it to come up with, but rephrases instead, which isn’t as good.

All in all, I prefer the tone and length of the Superhuman AI output, but it’s a bit dumber in interpreting the prompt. I don’t know what LLM it’s using, but it probably is a lesser one than GPT-4.

I would have had to change a lot to the GPT-4 output to be comfortable in my skin sending the message. It would have been easier and quicker to write it myself than do this much editing.

For the Superhuman AI (my voice version), I could have thought of the bullets to add as the only real edit. This means Superhuman AI is a more useful output in the right location. There is no way I would always tab out to OpenAI to write an email.

If it was inbuilt into my operating system, like perhaps Apple will do with MacOS/iOS in June... it may be a different story. It could learn from all of my writing and not just my emails. It could know about recent things I've been thinking about, too.

One nice thing about both methods is that they never have the small grammatical mistakes, typos and accidentally missing word issues that I have when writing manually. Actually not needing to check this would be really nice, a setting to just have GPT-4 or Superhuman AI not really change what I wrote, but fix any of these issues and simplify my long sentences would be incredibly helpful.

I don’t know why Superhuman AI isn’t in my voice by default… who else would be using my Superhuman application?

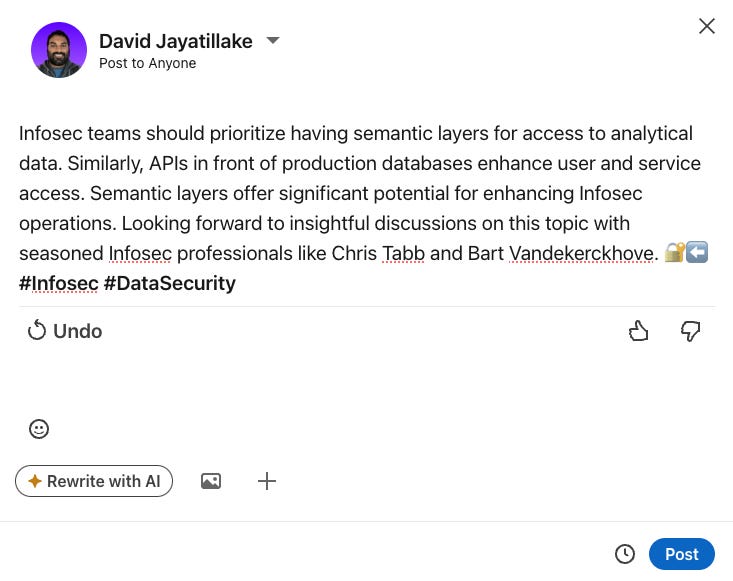

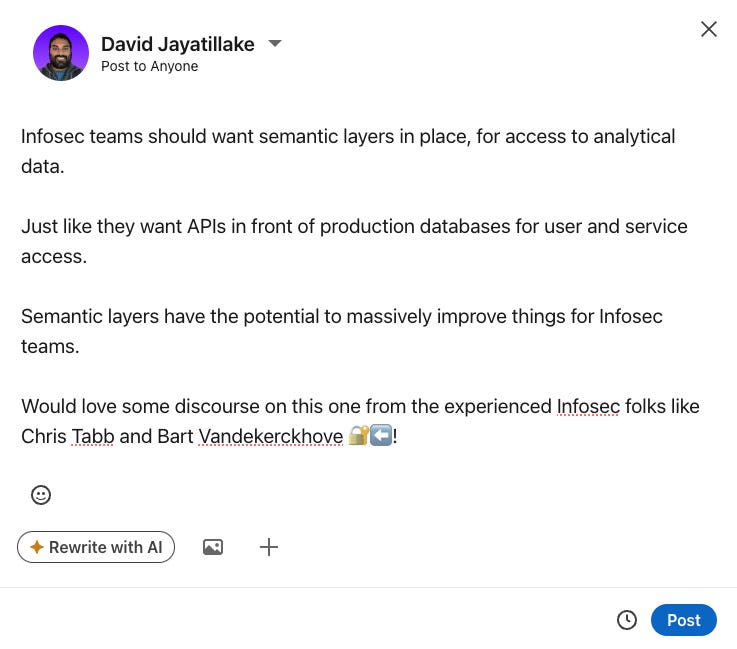

GPT-4 vs LinkedIn AI

LinkedIn’s AI feature is really poor - it feels like a box-ticking exercise, in all honesty. I don’t feel like it’s done very much with my original post and has made the format worse as one large blob of text. The only useful thing it’s done is to add the tags, which are a bit old school now anyhow.

Let’s see how GPT-4 fares:

In case it’s a bit small in the the picture above:

🔒💡 **Calling All InfoSec Professionals!**

In the realm of data security, the integration of semantic layers is becoming as crucial as having APIs for database access. Here’s why:

Semantic layers provide a **controlled and unified access point** to analytical data, ensuring that only the right eyes see sensitive information. This not only strengthens data security but also enhances the quality and reliability of data analytics.

Imagine a world where your data is both **secure and intelligently accessible** - semantic layers make this possible, aligning perfectly with the proactive approach needed in today’s dynamic cyber security landscape.

👨💼👩💼 I’m eager to hear from seasoned Infosec professionals on this! Chris Tabb, Bart Vandekerckhove, and others in the InfoSec community, what are your thoughts on the adoption and impact of semantic layers? Could this be the new frontier for enhanced security protocols?

💬 Please share your insights or experiences below!

#InfoSec #DataSecurity #CyberSecurity #TechTalk #SemanticLayers #DataAnalytics

This is much more like it. It’s well-formatted, it’s taken my relatively terse post and fleshed it out and made it stand out. I actually think I should use this going forward, at the very least for inspiration and structure (maybe I’ll use it to promote this very post).

Conclusion - GPT-4 is the clear winner.

Next week, I’ll continue to compare to other applications and share my overall conclusions.