The Data Chasm

Why are companies struggling with data?

In my previous post, I described how data is of value. I wouldn't have become a cofounder in a data SaaS company if I didn't believe this. I have recently been fundraising and it's been a great learning experience. One of the things that has stood out for me is how skewed my perspective on data maturity is.

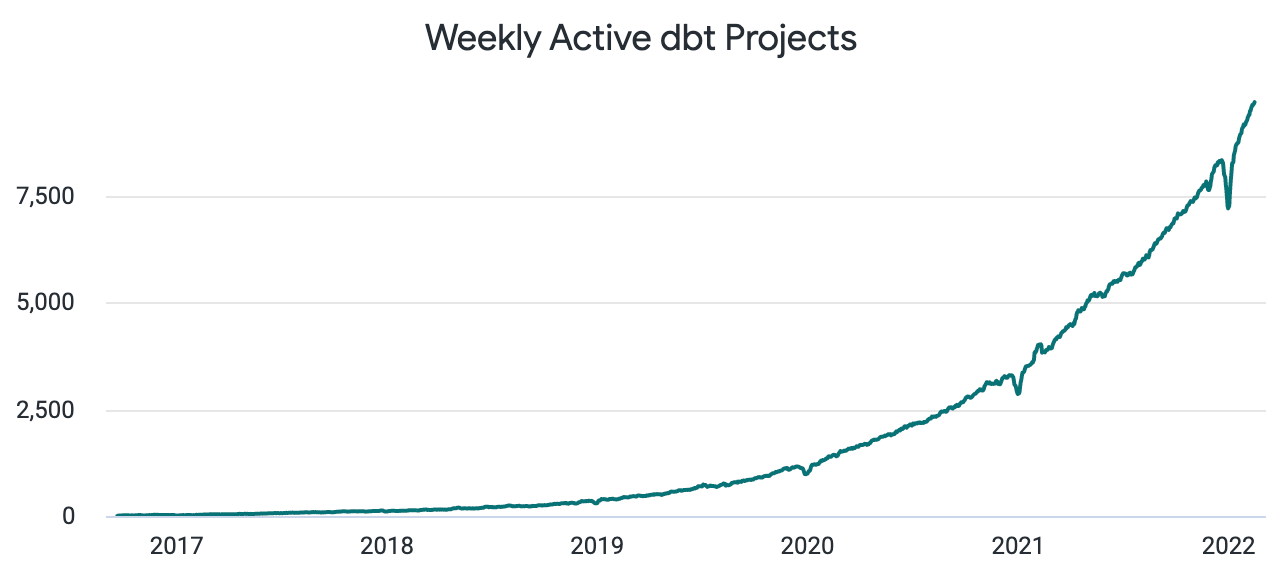

When setting up data teams, I've looked to bigger tech companies for inspiration; this could be on process, approach or stack. This yardstick is high, and it led me to believe that average data maturity is higher than it is. I thought that a lot of companies had data teams, processes and stacks at least as good as the ones I had built. In reality, we're still at the early adopter stage for a lot of these technologies and methods. There may be ~10,000 companies that have taken up dbt, but there are likely 100,000 that should and 1 million that could.

Why do companies struggle with data?

This is a collection of my observations and ideas regarding why companies are struggling. Very few companies, hopefully, will have all of these problems, but all will have some. Some of the behaviours I describe may seem awful or outlandish to some, but I promise they exist and happen every day in many companies. It may not be overt but the motivations are as I describe.

The first step, knowing they could or should use data to make decisions, is one many companies don't take:

It's possible that some companies just haven't considered using data to make decisions. There are many simpler businesses where it's not obvious, but it is still beneficial to use data.

Some business leaders may feel no need to use data to make decisions. They may feel experienced enough to decide what to do without needing data.

Some may not want to use data as it restricts what they can choose to do. Data used to make recommendations is constraining, as it shows some options as being worse. Leaders may not want this, as it empowers others to question their decisions.

Hiring in data is difficult at the moment.

No matter what vendors like me will say, I think the number one problem facing most data teams today is hiring.

There is a lot that goes into building a good data team. It's very easy to get wrong, and then you've taken two steps back instead of one forward. When this results in poor outcomes for the business, trust is quickly eroded and takes a long time to rebuild. Some businesses choose to hire lone data people and embed them into the business; these people often end up feeling isolated and unsupported, and quickly leave or struggle. This is not always the case - there are some genuine unicorn data people out there who thrive in these situations, but don't count on finding one.

I've personally seen this go wrong, and have had to come in and pick up the pieces. It's a huge part of why companies that want to use data fail at doing so. Companies need to get this right or not do it at all. It would be better to spend the budget on consultants or freelancers than to have a poor internal team.

Tech

Some companies are not interested in or not able to use cloud technologies yet, restricting their stack. A lot of pre-cloud technologies are not capable of dealing with the modern scale of data; if you pushed data from a CDP into a legacy on-prem db, it wouldn't cope! Many data practitioners wouldn't want to join a company that was older and pre-cloud, compounding the problem.

Where companies have gone cloud there are still many problems. I have had run-ins with Tech leaders that want to choose the data stack based on their design, rather than what works well for a data team. They may always want the default tool from the cloud vendor, they may want to use tools familiar to them or they may be against OSS.

During the "Big Data" era which preceded the MDS, the concepts of ELT over ETL and Data Lakes became prevalent. Some of these projects on Hadoop took years, spent millions and delivered nothing. They spent most of their time on data engineering and pipelines to get data from every possible place they might need. Some of the projects never got past this point - they never fulfilled any end use cases that delivered value. Younger data practitioners don't even know what Hortonworks/Cloudera/MapR were, let alone identify as "Hadoop Refugees". Spark/Databricks is one of the last parts of that era that has been adapted into the Modern Data Stack; even this has been called a $38B mistake. Unnecessary complexity in tooling and projects, and a lack of focus on business value is a huge challenge in Data.

Tech teams often misunderstand the data discipline:

Tech teams are often governed by Product Managers, who can be their sole connection to the business. This doesn't work well for data people, who need to be much closer to the business to thrive.

Unlike engineering, data work is often undertaken not knowing the full requirements or feasibility up front. T-shirt sizing is more difficult with data, as you are reasoning about the systems and the data produced. It's much less obvious that what you've built is correct with data than with engineering. It may have run, but is it correct? Testing is also much less mature with data than with engineering, compounding the issue.
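To make "it may have run, but is it correct?" concrete, here is a minimal sketch of the kind of checks a data team might run on a pipeline's output. The `check_orders` function, the column names and the sample rows are all hypothetical, invented purely for illustration; real teams would more likely express these as tests in their transformation tool of choice.

```python
from collections import Counter


def check_orders(rows):
    """Return a list of human-readable failures for a batch of order rows.

    Hypothetical checks: order_id must be present and unique,
    amount must be present and non-negative.
    """
    failures = []
    ids = [row.get("order_id") for row in rows]

    if any(i is None for i in ids):
        failures.append("order_id contains nulls")

    duplicates = sorted(
        i for i, n in Counter(ids).items() if i is not None and n > 1
    )
    if duplicates:
        failures.append(f"duplicate order_id values: {duplicates}")

    if any(row.get("amount") is None or row["amount"] < 0 for row in rows):
        failures.append("amount is missing or negative")

    return failures


# The pipeline "ran" and produced these rows, but two of them are wrong.
rows = [
    {"order_id": 1, "amount": 20.0},
    {"order_id": 2, "amount": 35.5},
    {"order_id": 2, "amount": 35.5},  # duplicated, e.g. by a fan-out join
    {"order_id": 3, "amount": -4.0},  # negative amount
]

failures = check_orders(rows)
for failure in failures:
    print(failure)
```

The point is not these particular checks: a job finishing without error says nothing about duplicates or impossible values, so correctness has to be asserted explicitly.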

Legacy tech teams often built services in a way where they would build as close to a finished product as they could. They would then only maintain and occasionally add features if needed. I have seen these approaches foisted onto data, where new use cases arrive and old ones are changed frequently. Data is incompatible with this way of thinking, and data teams often end up "going rogue" to solve use cases. This leads to duplicate metrics, systems that don't scale, inefficiency...

Tech teams often prioritise delivering a feature over ensuring associated data collection works. I have seen features launch without tracking to allow earlier launch. It's hard, if not impossible, to fix data after the fact, but data is rarely prioritised in many companies.

Politics, Leadership and Culture

Many of the most vocal members of the data community at large work at organisations that have achieved a great culture. Most organisations don't have a great culture!

Many have low talent density which is protected by favouritism and cliques. Many have a lack of openness and trust in the organisation, perpetuated by political and self-interested staff in leadership. Many have poor leadership that can't lead or manage their staff well. Many are just mediocre in culture... not particularly bad and not great; I believe org culture is roughly normally distributed in terms of standard. Many are not focused on culture at all, and are purely focused on revenue and profit.

While we have companies and organisations in the world, the standard of culture and technical ability in them will remain normally distributed. The vast majority of data practitioners won't work in companies at the better end of the distribution. We need to be realistic about this as a data community, and enable ways for more companies to win with data. We especially need to enable the majority of companies in the middle of the distribution. Many data practitioners from the innovators part of the distribution are founding companies for this purpose.

Success in data is particularly dependent on culture, even more so than in other disciplines. For data to have impact in an organisation, there needs to be a minimum level of openness, honesty and willingness to listen to others. Leadership at successfully "data-driven" orgs needs to be particularly open to suggestions. Many business leaders will believe they want to be data-driven but when the data suggests something inconvenient, they will undermine the recommendations to be able to do what they want.

Politically-minded C-suite executives will try to argue why data shouldn't be an independent function. I have seen Finance, Marketing, Product and Tech argue that data should be wholly contained in their areas. They may want the influence and possible prestige that comes from an expanded function, or they may not understand that data is a true discipline in its own right.

Data should have its own seat at the table, just as a function like Finance does. Data leaders can feel lost and without impact if they don't have this - regularly losing data leadership makes winning with data difficult. This is not an argument against embedding data colleagues in respective business areas. I think business partnering is essential for companies to win with data, in whatever form this takes: embedded or dedicated central resource.

Communication and Context

Many organisations operate as a collection of silos. I've been in companies of different sizes that had specific initiatives to break down these silos. These silos prevent knowledge and even physical data from being widely available. It's common for technical staff in these silos to be entirely inaccessible to staff from other silos. I've personally had to try to understand what data from another silo means, with no context or ability to speak to anyone from there! Even where these silos don't exist in companies, it's not unusual for communication to be sporadic or poor. Success with data, as in any other discipline, requires good communication. Tools such as metadata management platforms and data catalogs aim to help with this, by consolidating this information and relying less on repeated communication.

Documentation is also lacking, but I've come to a place where I only believe a few types of documentation can succeed:

Automatically generated documentation from the engineering process

An integrated Q&A workflow where there are defined data owners responsible for communication about their domains, with both proactive and reactive communication codified in a platform. This way questions are only asked and answered once, until the answer changes because there have been technical shifts.

Understanding of changes to data being decided AND codified as part of the product management workflow. This eliminates the need to re-collect this metadata later!

I don't believe in having humans whose job it is to do this manually - that's my take and not everyone will agree.
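As a rough sketch of what "codified" could look like, assume a tracking plan that is agreed as part of the product workflow and kept alongside the code. Everything here is hypothetical (the `TRACKING_PLAN` structure, the event and owner names, and `validate_event`); it is one possible shape, not a description of any particular platform.

```python
# Hypothetical tracking plan: each event has an owner and typed properties.
TRACKING_PLAN = {
    "product_viewed": {
        "owner": "web-team",
        "description": "A product detail page was shown to a user.",
        "properties": {"product_id": str, "price": float, "in_stock": bool},
    },
}


def validate_event(name, payload, plan=TRACKING_PLAN):
    """Return a list of problems with an event payload, empty if it conforms."""
    spec = plan.get(name)
    if spec is None:
        return [f"unknown event: {name}"]

    problems = []
    for prop, expected_type in spec["properties"].items():
        if prop not in payload:
            problems.append(f"missing property: {prop}")
        elif not isinstance(payload[prop], expected_type):
            problems.append(f"{prop} should be {expected_type.__name__}")
    return problems


# A payload that forgot the stock status is caught before launch,
# rather than discovered by an analyst months later.
problems = validate_event(
    "product_viewed", {"product_id": "sku-1", "price": 9.99}
)
print(problems)
```

Because the plan is plain data, the same structure could also be used to generate documentation automatically and to tell people who owns each event.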

Benn spoke about how context can be manipulated to make data say whatever you want. There is also a big problem in the industry with a lack of any context at all, which makes data hard to understand. If a product is clicked on but never converts, its stock status and its price relative to similar products are relevant context. In fact, without this additional metadata about the event, you can't really judge the conversion performance. This context provided by metadata is time specific too - things like price and stock status can fluctuate rapidly. Many organisations today don't collect the metadata that would enable them to win with their data. Context is instead inferred or assumed, and requires staff "who know the business", meaning that they are the source of metadata. Analysts often have reams of metadata about seasonality, one-off events, usual drivers of change... locked away in their minds.
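A small sketch of the product example, assuming stock status and relative price are captured on the event at the moment it happens. The `ProductView` record, its field names and the sample data are hypothetical and only there to show how the same clicks read differently once context is attached.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProductView:
    product_id: str
    converted: bool
    in_stock: bool       # stock status at the moment of the view
    price_ratio: float   # price / typical price of similar products, at that moment


def conversion_rate(views):
    """Share of views that converted, or None if there are no views."""
    views = list(views)
    if not views:
        return None
    return sum(v.converted for v in views) / len(views)


views = [
    ProductView("sku-1", False, in_stock=False, price_ratio=1.0),
    ProductView("sku-1", False, in_stock=False, price_ratio=1.0),
    ProductView("sku-1", True, in_stock=True, price_ratio=1.0),
    ProductView("sku-1", False, in_stock=True, price_ratio=1.0),
]

# Without context, the product looks like a poor converter.
raw_rate = conversion_rate(views)

# With context, half of the views could never have converted.
buyable_rate = conversion_rate(v for v in views if v.in_stock)

print(raw_rate, buyable_rate)
```

In this made-up data the raw rate is 0.25, while the rate among views where the product could actually be bought is 0.5. The context has to be recorded with the event, because looking up today's stock status later would give the wrong answer.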

I'd love for us to be in a place where lots of companies are ready to adopt metrics layers and freely buy data apps to take advantage of this... but we're not there yet with companies who aren't early adopters or innovators. The tools needed are being built, but they haven't been widely adopted yet. Companies need help to get value from these tools, and this may mean more vendors meeting these customers where they are right now, in terms of data maturity.
