Will AI save Data?

…and does it need saving?

I read Marc’s post anticipating that I would find hyper-capitalism, things for people to shake angry fists at… but I found nothing of the sort as I went through the post. I can’t really put my finger on anything I really disagree with. It’s a bet on history repeating - a very good bet, that leads to optimism, in my opinion. So, I’m going to dissect it and explore some of it, specifically relating to data.

Good and Evil

The first person who took a sharp piece of flint and thought, “Hmm, this could be useful”, may have been considering it for a weapon. But they may also have been thinking about using it to cut food. There is nothing inherently evil about the flint: it was the use of it that might be good or evil. The use of it was decided by a person. So, it was the person who chose to act in a good or evil way.

I believe we’re in a world where the choice to do good or evil is still entirely in the hands of people - the application of automation and non-deterministic systems still fits within this choice. It’s a choice of a statistical distribution of good and evil. Even blunt systems cause good and bad outcomes.

Imagine if you had a credit model (perhaps a “rule” is a better word) that said you could only borrow 10% of your income… it doesn’t take into account true affordability or likelihood of default. For some with low living expenses, this is much less than they could really afford to borrow and could restrict them. For others with very high living expenses, it could be dangerous to lend them this much, as they have very little disposable income with which to pay back the debt. We have had more nuanced actuarial-style credit models applied by humans, and ML-based models too, but they have inescapably also caused good and bad outcomes. In fact, the blunter the model or rule, the more frequently bad outcomes occur. Our models have been designed to help us cope with the nuance of situations that exist in the real world.
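To make the bluntness concrete, here is a minimal sketch of the 10% rule applied to two applicants with the same income. The applicants, their figures and the use of "income minus living costs" as a stand-in for affordability are all made up for illustration; this is not how any real credit model works.

```python
from dataclasses import dataclass

# Hypothetical figures for illustration only; not taken from any real credit model.
BLUNT_RATE = 0.10  # the "10% of income" rule described in the text


@dataclass
class Applicant:
    name: str
    annual_income: float
    annual_living_costs: float


def blunt_limit(a: Applicant) -> float:
    """Borrowing limit under the blunt rule: a flat share of income."""
    return BLUNT_RATE * a.annual_income


def disposable_income(a: Applicant) -> float:
    """Income left after living costs, a crude stand-in for affordability."""
    return a.annual_income - a.annual_living_costs


# Two invented applicants: same income, very different living costs.
frugal = Applicant("frugal", 50_000, 20_000)
stretched = Applicant("stretched", 50_000, 48_000)

for a in (frugal, stretched):
    print(a.name, blunt_limit(a), disposable_income(a))
```

Both applicants get the same limit from the rule, yet one has 30,000 left over each year and the other only 2,000, which is less than the amount the rule would let them borrow. A more nuanced model exists precisely to tell these two apart.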

This is true for large language models, too - we have had more blunt systems in the past to do NLP, for use cases like sentiment analysis. LLMs are much less blunt and able to incorporate many levels of context.

First, a short description of what AI is: The application of mathematics and software code to teach computers how to understand, synthesize, and generate knowledge in ways similar to how people do it. AI is a computer program like any other – it runs, takes input, processes, and generates output. AI’s output is useful across a wide range of fields, ranging from coding to medicine to law to the creative arts. It is owned by people and controlled by people, like any other technology.

AI, like flint, has to be applied by people in order to do something - to actuate. It has to be put into some kind of service, given inputs and run, and its outputs then have to be used to create something or make a decision.

Ah, but what about the pre-training and then fine-tuning? What if we’ve trained the model on the worst type of content, so that it spews forth hatred and misinformation?

Well, who trained the model and who applied it with the intention of doing harm? People. People are to blame for anything AI actuates. People are accountable and responsible; AI can never be. If people misuse AI to break our laws, people need to end up in the dock.

AI will make it easier for bad people to do bad things.

In some sense this is a tautology. Technology is a tool. Tools, starting with fire and rocks, can be used to do good things – cook food and build houses – and bad things – burn people and bludgeon people. Any technology can be used for good or bad. Fair enough. And AI will make it easier for criminals, terrorists, and hostile governments to do bad things, no question.

This causes some people to propose, well, in that case, let’s not take the risk, let’s ban AI now before this can happen. Unfortunately, AI is not some esoteric physical material that is hard to come by, like plutonium. It’s the opposite, it’s the easiest material in the world to come by – math and code…

…First, we have laws on the books to criminalize most of the bad things that anyone is going to do with AI. Hack into the Pentagon? That’s a crime. Steal money from a bank? That’s a crime. Create a bioweapon? That’s a crime. Commit a terrorist act? That’s a crime. We can simply focus on preventing those crimes when we can, and prosecuting them when we cannot. We don’t even need new laws – I’m not aware of a single actual bad use for AI that’s been proposed that’s not already illegal. And if a new bad use is identified, we ban that use. QED.

AI is so malleable that you could look at it in a similar way to metal. Metal can be used to build terrible things, but also incredibly useful things that are the literal foundations of our infrastructure as a civilisation. We don’t ban metal because of what it can be used for - we may ban or regulate dangerous use cases. However, these use cases already have governing law: hate speech, warfare and weaponry, theft…

Jobs

This part about the effect on jobs and productivity is very important, which is why I’m quoting such a large chunk:

The fear of job loss due variously to mechanization, automation, computerization, or AI has been a recurring panic for hundreds of years, since the original onset of machinery such as the mechanical loom. Even though every new major technology has led to more jobs at higher wages throughout history, each wave of this panic is accompanied by claims that “this time is different” – this is the time it will finally happen, this is the technology that will finally deliver the hammer blow to human labor. And yet, it never happens.

We’ve been through two such technology-driven unemployment panic cycles in our recent past – the outsourcing panic of the 2000’s, and the automation panic of the 2010’s. Notwithstanding many talking heads, pundits, and even tech industry executives pounding the table throughout both decades that mass unemployment was near, by late 2019 – right before the onset of COVID – the world had more jobs at higher wages than ever in history.

Nevertheless this mistaken idea will not die.

And sure enough, it’s back.

This time, we finally have the technology that’s going to take all the jobs and render human workers superfluous – real AI. Surely this time history won’t repeat, and AI will cause mass unemployment – and not rapid economic, job, and wage growth – right?

No, that’s not going to happen – and in fact AI, if allowed to develop and proliferate throughout the economy, may cause the most dramatic and sustained economic boom of all time, with correspondingly record job and wage growth – the exact opposite of the fear. And here’s why.

The core mistake the automation-kills-jobs doomers keep making is called the Lump Of Labor Fallacy. This fallacy is the incorrect notion that there is a fixed amount of labor to be done in the economy at any given time, and either machines do it or people do it – and if machines do it, there will be no work for people to do.

The Lump Of Labor Fallacy flows naturally from naive intuition, but naive intuition here is wrong. When technology is applied to production, we get productivity growth – an increase in output generated by a reduction in inputs. The result is lower prices for goods and services. As prices for goods and services fall, we pay less for them, meaning that we now have extra spending power with which to buy other things. This increases demand in the economy, which drives the creation of new production – including new products and new industries – which then creates new jobs for the people who were replaced by machines in prior jobs. The result is a larger economy with higher material prosperity, more industries, more products, and more jobs.

But the good news doesn’t stop there. We also get higher wages. This is because, at the level of the individual worker, the marketplace sets compensation as a function of the marginal productivity of the worker. A worker in a technology-infused business will be more productive than a worker in a traditional business. The employer will either pay that worker more money as he is now more productive, or another employer will, purely out of self interest. The result is that technology introduced into an industry generally not only increases the number of jobs in the industry but also raises wages.

To summarize, technology empowers people to be more productive. This causes the prices for existing goods and services to fall, and for wages to rise. This in turn causes economic growth and job growth, while motivating the creation of new jobs and new industries. If a market economy is allowed to function normally and if technology is allowed to be introduced freely, this is a perpetual upward cycle that never ends. For, as Milton Friedman observed, “Human wants and needs are endless” – we always want more than we have. A technology-infused market economy is the way we get closer to delivering everything everyone could conceivably want, but never all the way there. And that is why technology doesn’t destroy jobs and never will.

Just last week, I wrote about very senior IC roles and suggested that there would be a need for fewer junior IC roles in this upcoming LLM era. I almost certainly had a bit of “Lump of Labor Fallacy” (LoLF) baked into that way of thinking, even though it was not the conclusion of my point. The demand for output will just increase, and maybe that means we need 10x as many senior ICs compared to today. However, junior ICs may end up more like apprentices on the way to becoming senior ICs, rather than productive workers in their own right as they are today.

I think data people are possibly at higher risk of falling into the LoLF than others. We’re so used to looking at the numbers, chopping them up, moving them around… but then reconciling them back to known truths - the numbers need to add up. This way of thinking makes it easy to slip into treating production and demand as finite and fixed, like past data.

I had even thought about how demand could break these constraints, but it will also affect supply models. Yes, AI is a multiplier on productivity, but the multiplicative effect isn’t infinite. To meet the exponentially higher demand, we’ll need more people… not proportionally more, because of the productivity multiplier, but still more. Our operating efficiency skyrockets and then its growth slows - we still need people to be able to scale.

Many more people today produce the content accessible via a Google search than produced content for the Yellow Pages, Encarta and libraries before the internet era. They’re a lot better paid too: we didn’t have independent content creators in the past, as they couldn’t get the reach without a publisher, newspaper or broadcaster.

I also think the types of activity we do in our jobs and the types of jobs we have will improve. Data engineering will cease to be as much of a grind as it is today - many annoying and boilerplate tasks will go away. AI can already be used to generate cron expressions, more or less perfectly. Easy, you say, but next will be regex. What about unit testing? Documentation? Lots of parts of our jobs aren’t glamorous or what we particularly want to do - they are just things that we need to get done… not for much longer. Even more complicated things like orchestrator tasks could easily be written with a copilot-type AI tool, checked over by an engineer and then pushed.
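The “checked over by an engineer” step can itself be partly automated. As a rough sketch, here is a sanity check you might run on a generated cron expression before it goes anywhere near a scheduler. The function names are my own, and it only handles the plain five-field numeric syntax (`*`, numbers, ranges, lists and steps); month and weekday names and shortcuts like `@daily` are deliberately out of scope, and real cron implementations differ in the details.

```python
import re

# Allowed ranges for the five standard cron fields.
FIELD_RANGES = [
    ("minute", 0, 59),
    ("hour", 0, 23),
    ("day of month", 1, 31),
    ("month", 1, 12),
    ("day of week", 0, 7),  # many cron implementations accept 0 and 7 for Sunday
]

# One comma-separated part: '*', 'n' or 'a-b', optionally followed by '/step'.
PART = re.compile(r"(\*|[0-9]+(?:-[0-9]+)?)(?:/([0-9]+))?")


def _valid_part(part: str, low: int, high: int) -> bool:
    """Check a single part of a field against the field's allowed range."""
    match = PART.fullmatch(part)
    if match is None:
        return False
    base, step = match.group(1), match.group(2)
    if step is not None and int(step) == 0:
        return False  # a step of zero never advances
    if base == "*":
        return True
    numbers = [int(n) for n in base.split("-")]
    # Every bound must be in range, and a range must not run backwards.
    return all(low <= n <= high for n in numbers) and numbers == sorted(numbers)


def looks_like_valid_cron(expression: str) -> bool:
    """Return True if the expression has five fields, each within its range."""
    fields = expression.split()
    if len(fields) != len(FIELD_RANGES):
        return False
    return all(
        _valid_part(part, low, high)
        for field, (_, low, high) in zip(fields, FIELD_RANGES)
        for part in field.split(",")
    )


print(looks_like_valid_cron("*/15 9-17 * * 1-5"))
print(looks_like_valid_cron("61 * * * *"))
```

This only catches malformed expressions, not ones that are well-formed but scheduled for the wrong time, so a human still needs to confirm the intent.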

Does Data need saving?

As much as you could accuse me of being in the top 13 MDS Zealots, I do recognise Data needs saving. It’s too fragmented: where interfaces are lacking or poor, we plug a new tool in rather than improving the infrastructure. We cling to old tools like a religion because we like the people and orgs that made them and open-sourced them, when there are new ones we should migrate to en masse that would reduce the total number of tools we need.

“It’s muscle memory,” you say, but it isn’t for new starters or other people trying to collaborate. We limit our own scale in this regard. The only thing we’ve been able to agree on in the whole diagram is dbt, and even dbt Labs is going through its own night of Skele-Grow.

I may feel like things are OK, because I know which components play nicely together, and how they differentiate if they’re similar components… at least for the components I’ve heard of. For people out there who look at the diagram below in horror, the experience is very different. They end up longing for the old SSMS/Teradata/Oracle days, where everything was provided already integrated together on single billing. No wonder Microsoft are bringing out a tribute act. I’m a fairly risk-seeking person, but not everyone in data is like this; many are risk-averse and are afraid to try new approaches for fear of getting things wrong. We can’t avoid the fact that, in all likelihood, half of data folks and leaders are geared this way, so we need to provide them with clarity and safety in choice.

If you want further evidence, there is not just one but at least four VC-funded startups who build stacks from this large menu and make sure the components play nicely together. Why is it that I know of six different data orchestration tools? There is value in choice and there are great people working in this space, but there comes a point where we’re just adding future pixels to Matt’s face. Please, no more new orchestrators. We already have a legacy one, a good one, a pythonic one, a YAML one, a user-friendly one and another one open-sourced by another data tech darling.

There are many data consultancies who either recommend combinations from this menu, amalgamate them or both. I love and respect many people who run or work in this space, but they would also agree that it shouldn’t be so hard.

We blew up Airflow and are trying to kintsugi it. The golden lacquer was expected to be metadata, but we’re generating complexity in the sheer quantity of tooling, creating an O(c^n) scale problem in terms of needing things to interface with each other.

As much as I don’t want to return to the days that Microsoft Fabric harks back to, we can’t ask data teams to pay for 20 separate tools - many would only pay for 5 at a maximum, especially where they are having to do the work to make them play well together.
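To put a rough number on the integration burden, here is a small sketch that counts only pairwise connections between tools. That is an assumption of mine and a simplification: real stacks don’t connect every pair, but they also need some multi-tool combinations to work together, so treat it as a floor for intuition rather than a measurement.

```python
from math import comb


def pairwise_integrations(tool_count: int) -> int:
    """Number of distinct tool pairs, i.e. n * (n - 1) / 2."""
    return comb(tool_count, 2)


# Compare the 5-tool stack many teams would pay for with a 20-tool stack.
for n in (5, 20):
    print(n, "tools ->", pairwise_integrations(n), "possible pairwise integrations")
```

Going from 5 tools to 20 is 4x the tools but 19x the possible pairings (10 versus 190), and somebody has to own each one that matters.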

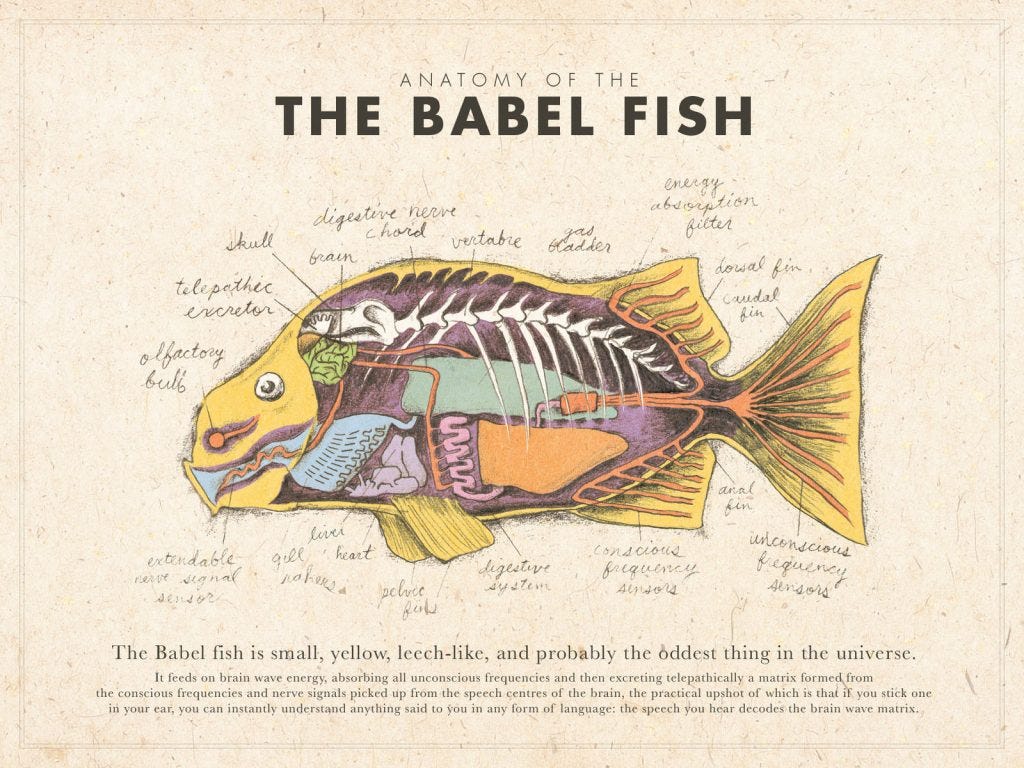

This current generation of AI isn’t like the ML models of the past decade, which could be used for classification and regression. However, LLMs are excellent for interface use cases, from any language to any other language. Natural language A to natural language B, natural language to machine language and vice versa. I hope… I believe that AI with metadata, combined, is the golden lacquer.

As for the people and processes for Data, a lot of the problems here are also interfaces. Data team to stakeholder, hub to spoke, manager to team member, data team to company leadership etc. Clearly, I believe we can improve the first of these interfaces, but I wonder how AI could help with things like distribution of best practices and documentation across distributed data teams, helping stretched line managers aggregate information they’ve gathered over a year into a draft of a review, appraising the value a data team has given to their organisation…

AI being regulated into an oligopoly won’t help things:

As history has shown, us “baptists” can be “bootleggers” in sheep’s clothing.

Big AI companies should be allowed to build AI as fast and aggressively as they can – but not allowed to achieve regulatory capture, not allowed to establish a government-protected cartel that is insulated from market competition due to incorrect claims of AI risk. This will maximize the technological and societal payoff from the amazing capabilities of these companies, which are jewels of modern capitalism.