Yes, I know this is a bold statement for a post title, but I believe it.

It’s not because I think people will build LLM-based tooling to improve data quality directly, in the sense of helping with engineering. There is a whole myriad of applications where this could be possible, starting left of the DAG:

- AI-suggested data models during product engineering, to improve the probability that product features generate high-quality data.
- Where a minority of events have defects that are easy to amend or gap-fill, AI could be used to fix them.
- AI could be used to determine a data contract automatically.
- It could generate the expected schema from the product feature change or creation.
- It could create and maintain tests to stop code entering production that would generate data that deviates from the expected schema.
- It could also suggest code changes to the PRs that trigger these test failures, to resolve them.
- It could summarise the logs, alerts and lineage of failures in prod to explain to engineering teams what happened and what they need to do to fix it.
- It could suggest the severity of data quality alerts and decide whether or not to disturb or wake up engineers.
- It could decide whether to revert engineering changes and then re-run orchestration jobs, if errors and corresponding alerts occur outside human working hours.
- It could decide infrastructure resource config or sizing ahead of possible failure:
  - Increasing prod/replica disk space to prevent WAL file overload on CDC.
  - Increasing Snowflake/Databricks cluster size to stop dbt models running for too long due to running out of memory and disk spill.
  - Sinking or stopping the posting of a topic to a stream, to stop the stream processor worker running out of memory or needing to scale up.

Plus many more use cases than I can think of in a few minutes. Essentially, every single atomic thing that a data engineer has to do as part of their job.
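To make the data contract and schema-test ideas concrete, here is a minimal sketch of the kind of check such tooling might generate and keep up to date. Everything in it is an assumption made for illustration: the `order_created` event, its field names and types, and the `contract_violations` helper are hypothetical, and a real setup would more likely lean on a schema registry or a validation library than on hand-rolled code.

```python
from typing import Any

# Hypothetical contract for an illustrative "order_created" event.
# The field names and types are assumptions made for this sketch.
ORDER_CREATED_CONTRACT: dict[str, type] = {
    "order_id": str,
    "customer_id": str,
    "amount_cents": int,
    "currency": str,
}


def contract_violations(event: dict[str, Any], contract: dict[str, type]) -> list[str]:
    """Return human-readable violations; an empty list means the event conforms."""
    violations: list[str] = []
    # Check every contracted field is present with exactly the expected type.
    for field, expected_type in contract.items():
        if field not in event:
            violations.append(f"missing field: {field}")
        elif type(event[field]) is not expected_type:
            violations.append(
                f"wrong type for {field}: expected {expected_type.__name__}, "
                f"got {type(event[field]).__name__}"
            )
    # Flag fields the contract does not know about (sorted for stable output).
    for field in sorted(event.keys() - contract.keys()):
        violations.append(f"unexpected field: {field}")
    return violations


good_event = {
    "order_id": "o-1",
    "customer_id": "c-9",
    "amount_cents": 1999,
    "currency": "GBP",
}
bad_event = {
    "order_id": "o-2",
    "customer_id": "c-9",
    "amount_cents": "1999",  # string instead of int
    "coupon": "WELCOME",     # not part of the contract; currency is missing
}

print(contract_violations(good_event, ORDER_CREATED_CONTRACT))
print(contract_violations(bad_event, ORDER_CREATED_CONTRACT))
```

Run in CI against sample events emitted by a feature branch, a non-empty result would fail the build before the change reaches production. The AI's part, in this picture, would be proposing the contract and keeping the test in step with feature changes.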

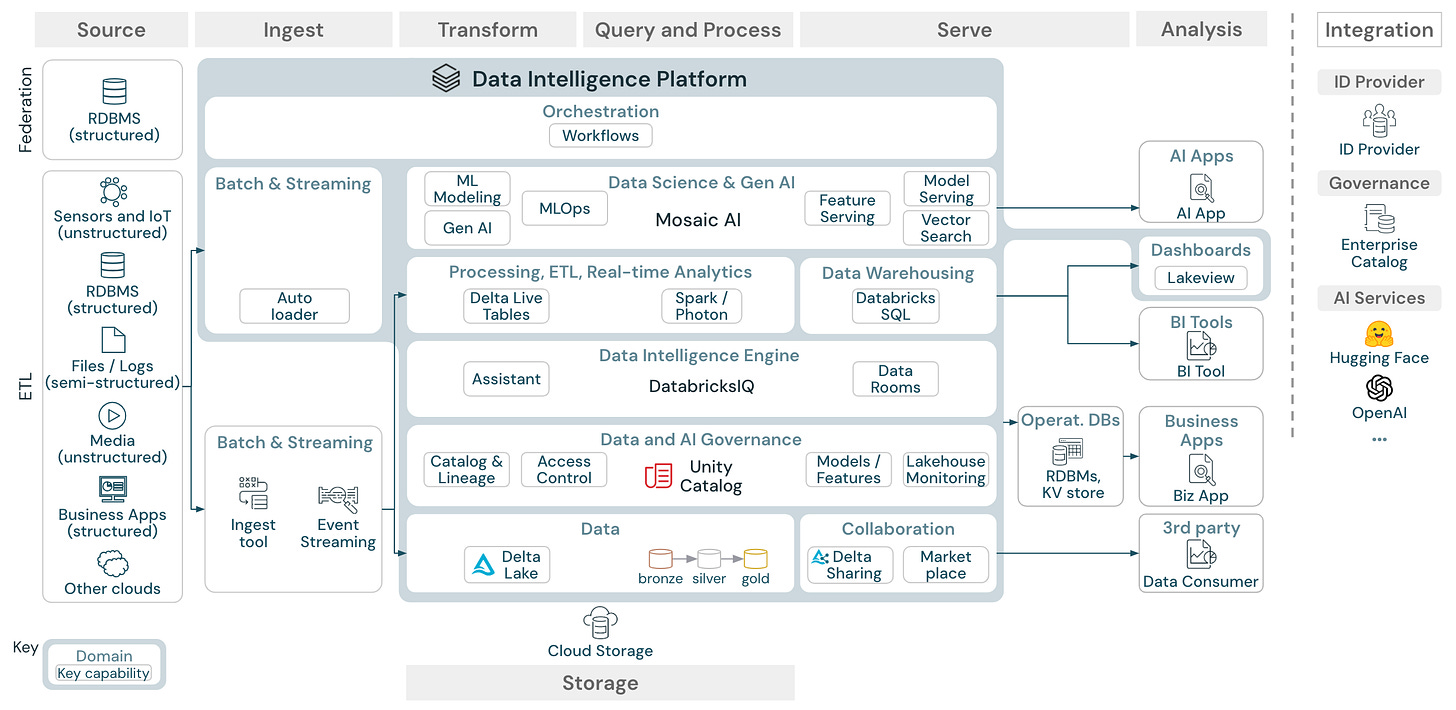

Just look at Databricks’ arch diagram and imagine all the ways AI could intervene, or assist engineers using the platform:

One day soon (and Palantir has shown us a glimpse of how this could be possible), every atomic task that a data engineer does will be assisted by an AI copilot, if it can’t be wholly automated. I believe this will increase output for data teams rather than necessarily improve data quality, but maybe it will do both. It certainly won’t replace senior members of staff working in data.

However, none of these things, alone or in aggregate, is why I think AI will solve Data Quality. Data Quality is much more than just an engineering problem. It’s more of a people, process and prioritisation problem.

As I’ve mentioned in the past, where teams deliver a data product that their customers pay for and rely upon, data quality isn’t a problem. It’s engineered away. Things that cause data quality issues are bugs, which for the most part don’t get beyond an IDE, let alone CI. Any rare bug that gets into prod is a SEV1 issue: the company drops what it’s doing, gets into an incident room and fixes it.

So the most effective solution to data quality that I’ve seen is priority. If the quality of the data generated is important to the company, the company will engineer it to be good enough to meet its customers’ requirements.

The reason that “dashboards are dead” is not that they’re useless. They have a place in the range of data products possible. A big part of why they’re dead is that often they’re not that important. Whether a dashboard is right or up to date probably doesn’t matter to a company’s success. Dashboards have become an inflexible way to provide answers to too many data questions directed at a data team and their data. Stakeholders are fed up of having to wait weeks for quick answers they want now. Basic data answers delivered in seconds or minutes will be table stakes within the next two years.

Generative AI has already achieved higher adoption than BI, even though it’s less than 2 years old in terms of GTM (no-one really played with GPT-2 beyond researchers, ML folks and insiders). BI is a 30+ year old discipline. The same executives who now use LLMs every day, whether directly or through applications, will expect the same level of access to their business data. In the medium to long term, chatbot-level ease of access to data is orders of magnitude more valuable to these folks (and their customers) than dashboards.

If they ask for an AI interface to data, and we say it will need X level of data engineering, a data warehouse/lakehouse, data transformation… a semantic layer to top it off, they will say “fine, give me a project timeline”. The will to prioritise this type of access in turn prioritises the data/analytics engineering work to deliver it, which in turn requires commitment to data quality in product engineering. Priority will solve data quality. AI is the carrot to drive data quality forwards and upwards.

AI can definitely help, especially when writing a lot of SQL queries for data quality. I'm

For example, the Vanna tool: https://www.junaideffendi.com/p/vanna-empower-teams-through-chat-gpt?utm_source=publication-search