Earlier this week, I ran a Cube webinar where I explained the difference between text-to-SQL and text-to-SL (Semantic Layer) methods for self-serve analytics.

I’ve spoken about this at length before, so I’m not going to rehash why I think text-to-SL is superior. You can read about it here:

A Darker Truth

It was about a year ago that I started the pre-seed fundraising process for Delphi. All of the VCs I spoke to asked me why we would succeed vs all the other entrants in the space. The vast majority of these entrants were using a method called “text-to-SQL”,…

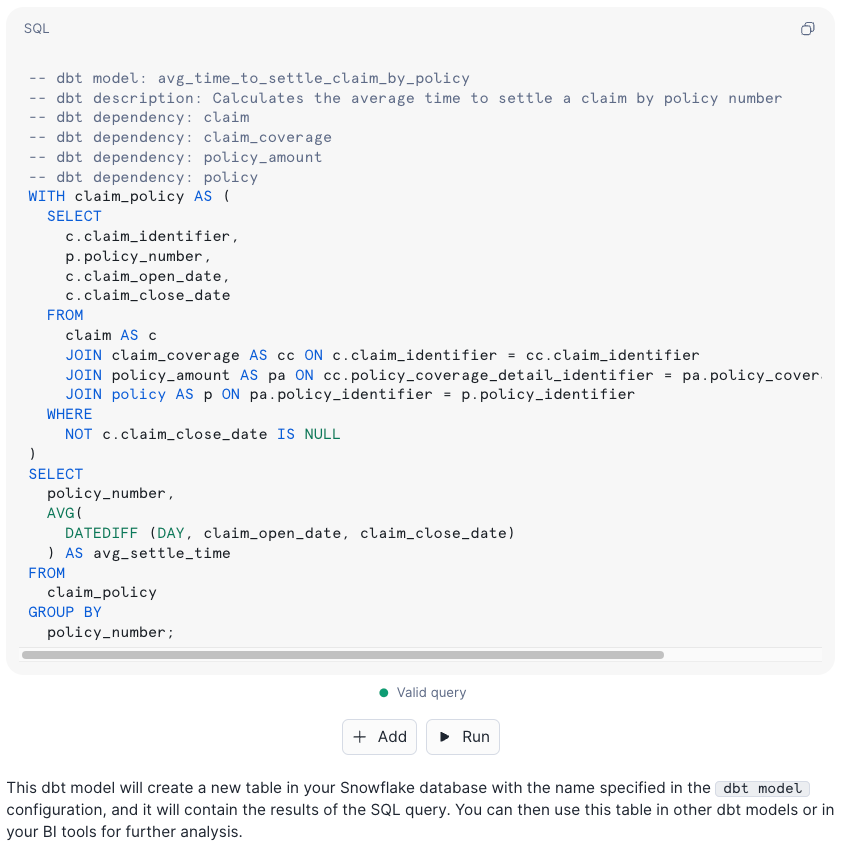

In the webinar, I ran a chatbot-off, where I used a few questions from data.world’s benchmark, as replicated by dbt Labs and then Delphi Labs. I tried out Snowflake’s newly released text-to-SQL Copilot to represent this method and used Delphi to represent the text-to-SL method. As before, when replicating all of the questions dbt Labs had, Delphi was able to answer all the questions correctly. Of the five questions I chose from this set, one was a gimme and the other four were ones that the text-to-SQL tool used for the benchmark got wrong.

This time around, Snowflake’s Copilot managed to answer three of the five questions correctly and one was relatively complex.

It still failed on one due to insufficient metadata and hallucinated on another. As I mentioned in A Darker Truth, you still have the problem of lack of transparency for complex solutions, even when correct.

The benchmark data model is just over 10 entities, so it’s not really that complex and it’s more or less a perfect data model with great names and metadata. So 3 out of 5 on this data model by no means makes it ready for self-serve analytics for non-technical stakeholders.

However, this is still the very best text-to-SQL tool I’ve ever tried by a country mile.

It’s not actually intended for self-serve analytics - it’s meant to be a copilot (it’s in the name). It’s for skilled data professionals who know their company’s data and how to write SQL. For someone like me, who has written or interacted with SQL (if you include dbt and semantic layers) for most days of my professional life so far, and have written my fair share of Snowflake SQL in their query editor, Snowflake Copilot seems excellent. It could have saved me heaps of time writing ad-hoc and exploratory queries. Even the query where it hallucinated was so close to correct that I could have fixed it in seconds.

Even before experiencing Copilot, I had long thought that Snowflake had some huge advantages in making a text-to-SQL tool. Beyond a huge amount of resources in terms of capital and headcount to throw at it, they have one fundamentally huge advantage… Snowflake have every query ever run on Snowflake in their query history.

If you understand how Snowflake is architected, you realise that there isn’t really such a thing as customer instances of Snowflake. There is just one big Snowflake, with a hierarchy that traverses something like world > cloud > region > organisation > account > database > schema > table. Customers just have their own parts of it underneath their organisations, but this organisation is really a collection of accounts which is a collection of databases… a higher schema.

Snowflake, by now, will have billions of queries run and in their query history. The perfect dataset for training a text-to-SQL LLM, beyond pre-training to gain knowledge of the world and language, is a set of pairs of questions with answers. Snowflake’s query history is one half of this dataset - the answers, valid SQL that served a purpose. There is a lot they could do to filter the queries to be considered truly valid answers. Queries run multiple times without edit (dashboard type queries). Queries that were parameterised and run with many permutations of parameters. Queries that were saved in named worksheets…

You might have a very fair question at this point… if they are answers - to what questions are they the answers? How would you possibly acquire these in a scalable way? SQL queries often don’t have a question they were trying to answer written down in the query comments. BI tools run SQL to feed into charts and dashboards without tagging what chart/dashboard they are related to. The question half of the dataset, when expected to be sourced from human teams, is essentially unobtainable. Much of the time, human data folks don’t really know the real question they are answering 🙃.

What if there was another way? What if the original questions being answered by the queries weren’t that important. If you could generate a valid question for each answer, this could make an amazing hybrid synthetic dataset - one that you could generate at scale. This is something LLMs would be great at, creating a question in language=text that would be answered by the valid SQL query. In fact, I believe it would be much better than the reverse route. SQL-to-text is an amazing use for an LLM, much better than text-to-SQL!

In theory, Snowflake could use the tooling and compute they have from their Nvidia partnership to host Mistral models they have got access to from their Mistral partnership and investment. They could then generate questions for their SQL queries in batch and at scale, with no huge token cost incurred from using a public model.

This would generate the perfect training dataset to then create another such Mistral model to do the very best job of text-to-SQL possible! Add to this the benefit that they only have to consider generating their own SQL variant, and their training data only contains their SQL variant.

Not only are text-to-SQL companies fighting a losing battle against the text-to-SL method in general, but even on their own method they can’t hope to compete with Snowflake. Snowflake has what I think is a moat in text-to-SQL - their dataset. It seems as though BigQuery has also gone this way, from their Google Next announcement, but I haven’t had a chance to try it. Databricks are also seemingly going this way.

I’ll be very direct - if you’re running a text-to-SQL startup, fresh out of YC or having just raised a big seed round etc, pivot now or waste your capital. You will never be the best at your own method - the data clouds and hyperscalers have a moat that you can’t and won’t ever have. You’re stuck between a better method on one side and sheer power on the other.

You might say that Snowflake’s Copilot isn’t a BI tool and isn’t looking to solve for self-serve, but read their new CEO’s words carefully, below. My new role at Cube, as VP of AI, is primarily to bring AI features into Cube’s products. Before Ramaswamy was CEO at Snowflake, he had a short-lived role with a similar purpose - just at a much larger scale. His move to CEO1 signals that bringing AI features into Snowflake isn’t a side priority for Snowflake any more - it’s the big game now.

We are invested in AI because we think it is going to make the creation of end-to-end AI products much better. Our dream here is like an API that our customers can use so that business users can directly talk to data. That’s the ultimate goal of Snowflake - democratized data within the enterprise. And we think this is a really important part of making that vision come true.2

The goal of “democratized data within the enterprise” sounds very similar to Looker’s goal a few years ago before the acquisition - or any BI tool, in all honesty. Snowflake will have to tread a careful path not to compete with their ecosystem and BI tools within it, but at the same time can’t afford for GCP and DBRX to get ahead of them in this regard. Business users want to use AI with data, that much is clear. They are tired of waiting for data teams and want a chatGPT-style experience.

Business users don’t usually go into the Snowflake platform today, except perhaps for the odd Streamlit app. If Snowflake expect to serve business users, this will be a big change in UI/UX or meeting them where they are - perhaps in other platforms, like Salesforce or BI tools.

Will Snowflake’s text-to-SQL ever be good enough to beat text-to-SL? I don’t think so, but I saw something that made me wonder if they’ve made their own steps towards text-to-SL under the hood.

When I first uploaded the ACME_insurance data model into a Snowflake schema (currently you can only ask questions on one schema’s data), I tried to immediately ask a question using Copilot, but got this message:

COPILOT

We are in the process of indexing the tables and views in the selected database and schema. Please try again later.

This hints at a fairly sophisticated RAG system, where field and table names are probably being vectorised, but, as you saw from the plan Copilot made before generating the query, it seemed to have knowledge of the structure of the data without being told - an inferred knowledge graph from natural join keys etc. Bear in mind, this is all my conjecture about inside the box from looking at the outside - the reality could be very different.

This would explain why it was able to answer more questions than a regular text-to-SQL tool, beyond the training dataset advantage. In the data.world benchmark, their knowledge graph method for querying performed much better than text-to-SQL, 54% accuracy vs 17%.

I believe the end of the road for text-to-KG is text-to-SL - once you hit the limits of the context provided by the knowledge graph alone, you realise you need the additional constraints that the special knowledge graph for querying data, the semantic layer, provides.

Frank Slootman comes for us all, even Frank Slootman

https://venturebeat.com/data-infrastructure/snowflake-launches-arctic-an-open-mixture-of-experts-llm-to-take-on-dbrx-llama-3/