Jordan Tigani wrote a great piece last week about how we focus too much on query performance and benchmarks, when the real metric that matters is time from idea to answer.

None of the most successful database companies got that way by being faster than their competitors. Redshift was king for a while, and the thing that let Snowflake in the door was maintainability, not performance on benchmarks. Databases whose primary selling point was performance did not perform well in the market. Databases who made it easy to get jobs done fared a lot better.

If you think about the idea that making things easier is more important than outright performance, this makes a lot of sense - the cost of labour is much higher than SaaS and cloud spend. You wouldn’t choose a data warehouse based on a pure speed or cost benchmark - it’s more important that it integrates well with the other tools that you use. It’s more important that it’s easy for your team to leverage and deploy.

Focusing on time from idea to answer, it is certainly true that having a data warehouse that is well-integrated and easy to use does help get to answers more quickly. If you have to worry about infrastructure concerns like whether running a query will cause your server to run out of memory and fall over, it means the overhead of trying something out takes much longer than the actual thing you wanted to do.

A while ago, I wrote about how impressed I was that BigQuery lets you query data from PubSub topics. In another stack, this would require a whole ETL pipeline to be built, where the data engineers would be wrangling Kafka, getting the events to be stored in a data lake and then ingested into a data warehouse. GCP allowing BigQuery to query PubSub topics directly (probably by abstracting the typical ETL someone might do otherwise), has exponentially reduced time from idea to answer in this instance. The time saving is so much larger than could have been achieved by even making the query execute 100x faster. Software engineer sends events to the PubSub topic, analytics engineer picks it up as a dbt source… removing the data engineer from the workflow entirely. If data engineering was under-resourced (it always is), it could take months for the ETL pipeline to be built from Kafka to data warehouse.

Semantics are one leg of the journey

One of the other human time costs that is a sub-component of time from idea to answer, is composing the query. Understanding how to write a query to answer a question, when directly writing SQL on a schema, requires the analyst to understand the data model: which tables join to which, using which keys, which columns to aggregate to generate metrics, which columns to group by to expose dimensions of interest… In data, this is typically defined in semantics.

Semantics (from Ancient Greek σημαντικός (sēmantikós) 'significant')[a][1] is the study of reference, meaning, or truth. The term can be used to refer to subfields of several distinct disciplines, including philosophy, linguistics and computer science…

…Computational semantics is focused on the processing of linguistic meaning. In order to do this, concrete algorithms and architectures are described. Within this framework the algorithms and architectures are also analysed in terms of decidability, time/space complexity, data structures that they require and communication protocols.[20]…

…The Semantic Web refers to the extension of the World Wide Web via embedding added semantic metadata, using semantic data modeling techniques such as Resource Description Framework (RDF) and Web Ontology Language (OWL). On the Semantic Web, terms such as semantic network and semantic data model are used to describe particular types of data model characterized by the use of directed graphs in which the vertices denote concepts or entities in the world and their properties, and the arcs denote relationships between them. These can formally be described as description logic concepts and roles, which correspond to OWL classes and properties.[25]1

Semantics bridge the gap between the idea and the resources to answer, with data. Semantics are old - as old as language. With the Ancient Greeks being amongst the first to study it as a field, and it existing in what is now ancient computing.

As quoted above, semantics is a subfield of both linguistics and CS, which is probably why it is the perfect bridge between the two. Computational semantics, as described above, covers a huge part of what LLMs are focused on today. It’s no wonder that augmenting LLMs with semantics drives huge performance boosts.

Coming back to the SQL query, let’s take a look at a simple example. We want to find out all of our user names. Well, looking through the schema, there appears to be a users table. Looking at the table, it has a name field. Then surely the query is as simple as:

SELECT users.name FROM users

Hmm, sometimes the name for a user is null. That’s not great, is there any way of filling in the gaps? I’ll go and ask an engineer who knows about the user service. He’s in a meeting… I’ll come back after lunch… Ah, he’s finally back at his desk/not busy on Slack! He says that sometimes the user is created in circumstances without all the fields - typical edge case. He also says that if we collect the name later, it will be stored in the contacts table, which can be joined to the users table.

So let’s go look at the contacts table. The contacts table does indeed have a name field, but how do I join it to the users table? The contacts table only seems to have an id field, which is its primary key and has no foreign key related the users table like user_id.

Let’s see if it works the other way and there is a foreign key in the users table, leading back to the contacts table. Ah yes, there does seem to be a contact_id field, that looks promising! There is no documentation to confirm this is how to join these tables together, though. Let’s test it:

Is it possible for a contact_id in the users table to not exist as a primary key in the contacts table?

SELECT users.contact_id

FROM users

LEFT JOIN contacts

ON users.contact_id = contacts.id

WHERE contacts.id is NULL

No results, as expected. Let’s do one more test. Is it possible for the name in the users table to be different to the name in the contacts table, where both exist?

SELECT users.name, contacts.name

FROM users

INNER JOIN contacts on users.contact_id = contacts.id

WHERE contacts.name is not NULL

AND users.name is not NULL

AND users.name <> contacts.name

There are a few results… why does this happen? Let’s go and ask the engineer again, I imagine this is going to be a new and exciting edge case 🫠. He’s AFK, it’ll have to wait till the morning… Apparently it is possible for the user to change their name if it was captured incorrectly (some source systems accidentally map surname and first name the wrong way round into our backend). What can happen is that the fix will be input and apply to the users table, but not to the contacts table - therefore, favour the name from the users table if you have it.

SELECT COALESCE(users.name, contacts.name) users__name

FROM users

LEFT JOIN contacts

ON users.contact_id = contacts.id

This has taken a couple of days to answer a simple question. I’m going to write down what I found out in a Google Doc, so the next person to do this will find it (haha of course they won’t, because I’ll probably have left the company by then and IT will have deleted my login and any docs that I created that others haven’t copied).

I know this is a contrived situation, and on the second time being asked this question, the analyst will refer to their previous code and do the job in minutes rather than days. However, analysts are often writing novel code to do work more complicated than this. The semantic overhead from constructing this query was about two days… let’s call it 200k seconds. Pretty much any database or data warehouse would have been able to pull the results in less than a second, once the query had been correctly written. In this case, the semantic overhead in time could be a million times larger than the query performance overhead.

Whether our DWH ran the query 10 times faster or cheaper than another one or the replica of the prod db we sometimes query (we have the creds on a post-it stuck under our runners up trophy for tug-of-war at the last company sports day, but don’t tell anyone that 🤫), is quite frankly irrelevant. The analyst above has run many queries to get to the one they needed, all costing money on cloud data warehouses.

Bear in mind we’ve also distracted an engineer twice to learn the semantics to build the query, and when we leave, approximately 17 months after joining, our replacement will repeat the process after cursing us for not leaving a Google Doc with this kind of information.

This semantic overhead is looking like a cost line we need to reduce with extreme prejudice.

Semantic Layers can consistently and massively reduce the semantic overhead component of time from idea to answer

If you’ve read my blog for a while, you’ll know I’ve written a fair bit already about semantic layers - which are pieces of technology used to abstract entities and their metrics, measures and attributes from data structures. Therefore allowing quick, safe, easy and cheap2 access to already defined abstractions on top of data.

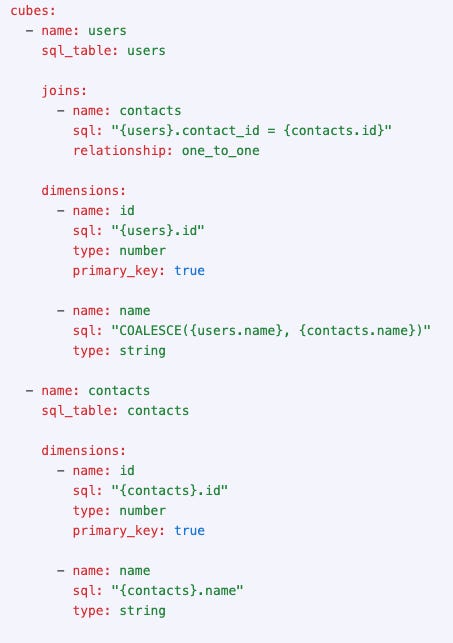

The semantic layer code for the scenario above is as follows:

It’s not vastly more complex than the query - most of the additional code is adding metadata which, once committed, is permanently available, version controlled and enacted for future use. In many ways, it’s easier to read for non-technical users than the SQL.

As the assertions like data type, primary key, referential integrity and object existence are all explicit from the metadata input, they can be tested automatically too. This helps keep the semantic layer correct where static code or notes in a Google doc wouldn’t be.

Any future user or analyst who wants the names of users need only request user.name from any of the API types the semantic layer offers or tools it integrates with (eg Excel, BI tool, Reverse ETL…). I specifically mentioned analyst there because where a semantic layer has been in place, I’ve seen analysts who were capable of writing the SQL needed to get to an answer instead use the semantic layer to save time/cost and avoid errors.

This is why investing in a semantic layer makes more sense than an expensive migration from your current data warehouse to a more expensive one that is potentially faster, according to “performance benchmarks”. You could keep your current data warehouse and get a huge time saving without needing to remodel your data - you can use the semantic layer to abstract your logical data model to something more intuitive.

Oftentimes, these data warehouse migrations and comparisons happen because the current tool a company has is struggling in some way. Semantic layers have caching features, some of which are truly state-of-the-art, which can also mitigate these problems in the existing data warehouse. However, this only really works for standalone semantic layers, where nearly all read access for the data warehouse is via the semantic layers’ APIs and therefore has a chance of hitting the cache.

I have run a Redshift to Snowflake migration at a company where Looker was in place. Looker’s caching feature wasn’t enough to stop Redshift from experiencing concurrency issues, in part because a great deal of Redshift queries and transactions were not via Looker.

All of the benefits I’ve described above really make a semantic layer a product which has a huge ROI, far greater than their cost, far greater than a database migration.

https://en.wikipedia.org/wiki/Semantics#

If a query can be answered from a semantic layer rather than rolling your own SQL, you can save a huge amount of analyst time. Analysts, when writing SQL queries from scratch, run many queries to test their assumptions about the data, and that their queries actually work at many points during the development process - in the cloud era, this costs money. It’s also easy for an analyst to accidentally forget to filter their query, leading to a full table scan of a large table 🤑. Semantic layers can have advanced caching features, which also can reduce data warehouse cloud costs significantly while also hugely reducing latency, where the cache is hit.